As AI systems become increasingly central to safety-critical domains—automotive, robotics, and industrial autonomy—the question is no longer whether to apply safety standards, but how those standards translate into real engineering practice.

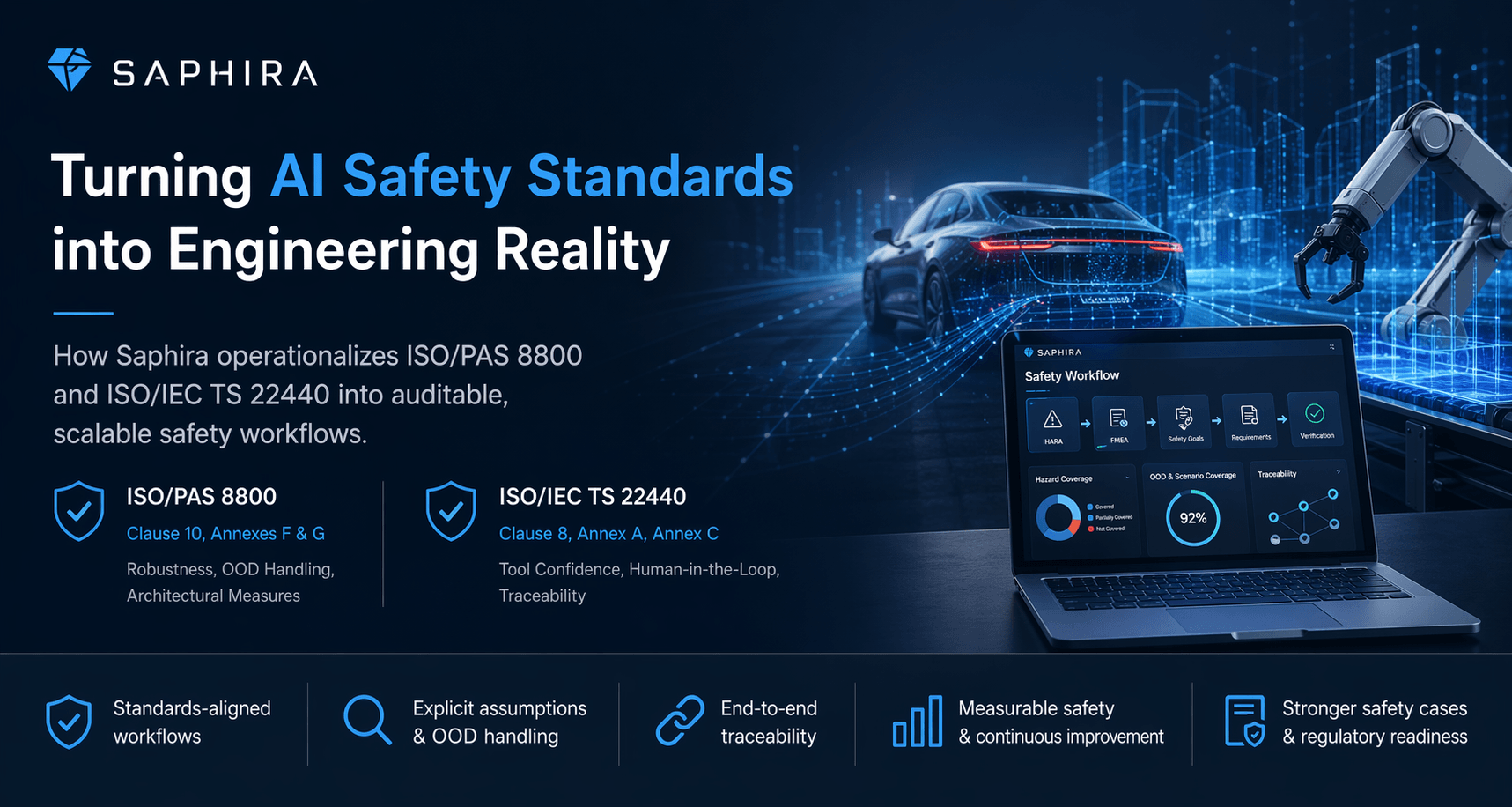

In a recent discussion with industry experts, we explored how guidance from ISO/PAS 8800 and ISO/IEC TS 22440 is actively influencing modern safety workflows, system architectures, and regulatory strategies.

The takeaway is clear:

These standards are not theoretical—they are actively reshaping how safety-critical AI systems are built, validated, and communicated.

1. From Standards to Structured Workflows

At Saphira, we don’t treat standards like ISO/PAS 8800 or ISO/IEC TS 22440 as standalone compliance checklists. Instead, we embed their principles directly into how safety workflows are structured and executed.

This includes:

- Human-in-the-loop approval gates for all safety-critical artefacts

- Full traceability across HARA, FMEA, and safety concepts

- Structured inputs and outputs, replacing ad hoc documentation

- Explicit handling of operational assumptions (ODD/OED)

This approach reflects core ideas from:

- ISO/IEC TS 22440 (Clause 8, Annex A, Annex C) — tool classification, error detection, and confidence in use

- ISO/PAS 8800 (Clause 10, Annexes F & G) — robustness, architectural measures, and handling of AI uncertainty

Rather than asking “Is AI allowed?”, the practical question becomes:

How do we constrain AI so that it operates within reviewable, auditable safety processes?
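The approval gates and traceability described in this section can be sketched in a few lines. This is a minimal illustration, not Saphira's implementation: the `Artefact` fields, the `Status` values, and the ID scheme (`HAZ-001`, `SG-001`) are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Set


class Status(Enum):
    DRAFT = "draft"        # produced by a tool or an engineer, not yet reviewed
    APPROVED = "approved"  # signed off by a named human reviewer


@dataclass
class Artefact:
    artefact_id: str                       # hypothetical ID scheme, e.g. "HAZ-001"
    kind: str                              # "hazard", "failure_mode", "safety_goal", ...
    text: str
    traces_to: List[str] = field(default_factory=list)  # IDs of upstream artefacts
    status: Status = Status.DRAFT
    approved_by: Optional[str] = None


def approve(artefact: Artefact, reviewer: str, known_ids: Set[str]) -> Artefact:
    """Human approval gate: refuse sign-off if any trace link is dangling."""
    if not reviewer.strip():
        raise ValueError("approval requires a named human reviewer")
    missing = [t for t in artefact.traces_to if t not in known_ids]
    if missing:
        raise ValueError(f"{artefact.artefact_id} traces to unknown artefacts: {missing}")
    artefact.status = Status.APPROVED
    artefact.approved_by = reviewer
    return artefact


def releasable(artefacts: List[Artefact]) -> bool:
    """A set of work products is releasable only when every artefact is approved."""
    return all(a.status is Status.APPROVED for a in artefacts)
```

The design choice worth noting is that approval and traceability are checked together: an artefact cannot be signed off while it points at something that does not exist, so review effort is not spent on work products that are already inconsistent.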

2. A Shift Toward Explicit Assumptions and OOD Awareness

One of the most significant impacts of ISO/PAS 8800—especially Clause 10 and Annexes F & G—is the emphasis on:

- Explicit system assumptions

- Operational design domain (ODD/OED) clarity

- Out-of-distribution (OOD) behavior

Historically, many of these elements were implicit. Today, they are becoming first-class engineering artefacts.

In practice, this means:

- Safety workflows now require clear documentation of system limits

- Teams systematically explore edge cases and OOD scenarios

- Perception systems are evaluated not just on accuracy, but on failure behavior outside the training distribution

This is especially critical for:

- Autonomous vehicles

- Industrial robotics

- Perception-heavy systems
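As a rough sketch of what "system limits as a first-class artefact" can look like, the example below encodes a few operating limits as data and checks a runtime condition against them. The field names and all numeric values are illustrative assumptions, not limits taken from any standard or product.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class OddLimits:
    """Documented operating limits. All fields and values are illustrative."""
    max_speed_kph: float
    min_illuminance_lux: float
    allowed_weather: FrozenSet[str]


def odd_violations(limits: OddLimits, speed_kph: float,
                   illuminance_lux: float, weather: str) -> List[str]:
    """Return human-readable violations; an empty list means inside the limits."""
    violations = []
    if speed_kph > limits.max_speed_kph:
        violations.append(f"speed {speed_kph} kph exceeds {limits.max_speed_kph} kph")
    if illuminance_lux < limits.min_illuminance_lux:
        violations.append(
            f"illuminance {illuminance_lux} lux below {limits.min_illuminance_lux} lux")
    if weather not in limits.allowed_weather:
        violations.append(f"weather '{weather}' not in documented limits")
    return violations


# Example: a hypothetical low-speed shuttle
limits = OddLimits(60.0, 50.0, frozenset({"clear", "light_rain"}))
print(odd_violations(limits, 80.0, 10.0, "snow"))
```

Keeping the limits in one frozen object means the same definition can be cited in the safety case, used to generate test scenarios, and checked at runtime.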

3. OOD Detection as a Safety and Audit Mechanism

A particularly important concept is out-of-distribution (OOD) detection.

In simple terms:

- In-distribution → inputs that resemble the data the model was trained and validated on

- Out-of-distribution → inputs that differ materially from that data, such as novel or unexpected scenarios

OOD detection provides a mechanism to:

- Identify when the system is operating outside its known capabilities

- Trigger fallback or safe-state behavior

- Log events for audit and analysis

In real deployments, this has led to:

- Structured logging of interventions and edge cases

- Improved auditability of AI system behavior

- Use of OOD signals as a qualitative safety metric

In some cases, teams have reported significant reductions in incident rates (e.g., up to ~90%) through:

- Data collection during development

- Simulation-based testing and mitigation

- Iterative improvement cycles

These workflows align directly with the intent of ISO/PAS 8800.
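The detect, fall back, and log pattern from this section can be sketched as follows. The example uses maximum softmax probability as the OOD signal, which is a common baseline swapped in here for illustration; it is known to be a weak detector on its own, and neither the technique nor the `0.8` threshold is prescribed by the standards discussed. The function names and the event format are assumptions.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decide(logits, threshold=0.8, audit_log=None):
    """Return (action, confidence).

    If the top-class probability is below `threshold`, treat the input as
    possibly out-of-distribution: return "fallback" and record an audit event.
    """
    probs = softmax(logits)
    confidence = max(probs)
    if confidence < threshold:
        if audit_log is not None:
            audit_log.append({"event": "ood_suspected",
                              "confidence": round(confidence, 4)})
        return "fallback", confidence
    return f"class_{probs.index(confidence)}", confidence


log = []
print(decide([5.0, 0.0, 0.0], audit_log=log))  # confident: acts on class_0
print(decide([1.0, 1.0, 1.0], audit_log=log))  # uncertain: falls back
print(log)                                     # one logged event
```

In a real system the audit log would carry timestamps, sensor context, and the model version, so that each fallback can be replayed in simulation later.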

4. Bridging AI Behavior to Safety Artefacts

A recurring challenge in AI safety is translating model behavior into:

- Hazards

- Safety goals

- Requirements

- Safety case evidence

Standards like ISO/PAS 8800 and ISO/IEC TS 22440 are driving a shift toward:

- Systematic linkage between AI failure modes and hazard analysis

- Explicit reasoning about how uncertainty propagates into risk

- Stronger connections between:

  - HARA

  - FMEA

  - Functional safety concepts

In practice, this results in:

- More consistent safety artefacts

- Earlier identification of gaps or missing mitigations

- Better alignment between engineering teams and assessors
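One way to make "earlier identification of gaps" concrete is a simple consistency check over the links between AI failure modes, hazards, and mitigations. The sketch below assumes a plain dictionary representation and a hypothetical ID scheme (`FM-01`, `HAZ-001`, `REQ-010`); real safety tooling would hold far richer data.

```python
def find_gaps(failure_modes, hazards, mitigations):
    """Report failure modes with missing or broken links.

    failure_modes: {fm_id: [hazard_id, ...]}  failure mode -> hazards it contributes to
    hazards:       set of hazard IDs known from the HARA
    mitigations:   {fm_id: [requirement_id, ...]}
    Returns a list of (fm_id, problem) tuples, ordered by failure mode ID.
    """
    gaps = []
    for fm_id, linked in sorted(failure_modes.items()):
        if not linked:
            gaps.append((fm_id, "no linked hazard"))
        for hazard_id in linked:
            if hazard_id not in hazards:
                gaps.append((fm_id, f"unknown hazard {hazard_id}"))
        if not mitigations.get(fm_id):
            gaps.append((fm_id, "no mitigation"))
    return gaps


failure_modes = {
    "FM-01": ["HAZ-001"],   # e.g. missed pedestrian detection
    "FM-02": [],            # analysed, but never linked to a hazard
    "FM-03": ["HAZ-404"],   # links to a hazard that is not in the HARA
}
hazards = {"HAZ-001"}
mitigations = {"FM-01": ["REQ-010"], "FM-03": ["REQ-011"]}

for fm_id, problem in find_gaps(failure_modes, hazards, mitigations):
    print(fm_id, "->", problem)
```

Running a check like this on every change surfaces missing mitigations before an assessor does.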

5. Architectural Measures for Robustness

Annexes F and G of ISO/PAS 8800 emphasize architectural and development techniques for improving robustness.

Many of these techniques, together with related explainability methods, are already widely used in industry:

Architectural Measures

- Model ensembles

- Redundant perception pipelines

- Reject classes / uncertainty thresholds

Development Techniques

- Data augmentation

- Adversarial or fault-aware training

- Transfer learning

Explainability Methods

- Saliency maps

- Structural coverage metrics

- Model introspection techniques

While some of these are standard in ML engineering, their formalization in safety standards is driving:

- More consistent adoption

- Better auditability

- Stronger safety case arguments
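Two of the architectural measures listed above, model ensembles and a reject class, combine naturally. The sketch below is a minimal illustration: each ensemble member casts one vote, and the ensemble abstains when agreement falls below a threshold. The `"REJECT"` label and the `0.75` agreement threshold are assumptions chosen for the example.

```python
from collections import Counter


def ensemble_predict(votes, min_agreement=0.75):
    """Majority vote with a reject option.

    votes: one predicted label per ensemble member.
    Returns the winning label, or "REJECT" when there are no votes or the
    share of members agreeing on the top label is below `min_agreement`.
    """
    if not votes:
        return "REJECT"
    label, count = Counter(votes).most_common(1)[0]
    if count / len(votes) < min_agreement:
        return "REJECT"
    return label


print(ensemble_predict(["pedestrian"] * 4))
print(ensemble_predict(["pedestrian", "cyclist", "pedestrian", "cyclist"]))
```

A rejected prediction is itself useful evidence: it can trigger a fallback, and the rate of rejections over time can be tracked as a robustness indicator.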

6. Impact on Safety Cases and Regulatory Communication

Perhaps the most important shift is how these standards influence communication with regulators and assessors.

Key changes include:

- Safety cases now incorporate explicit assumptions and limitations

- OOD behavior is used to justify:

  - System boundaries

  - Residual risk

  - Continuous improvement strategies

- Metrics and evidence are becoming more structured and auditable

As highlighted in the discussion:

Regulatory and legal processes increasingly require not just internal claims but publicly defensible evidence of how standards are applied.

This is driving demand for:

- Clear documentation

- Measurable safety indicators

- Public-facing technical material

7. The Role of Tooling in Enabling This Shift

Finally, standards like ISO/IEC TS 22440 play a critical role in defining how tools—especially AI-assisted tools—can be safely used.

In practice, this means:

- Treating AI as an assistive tool (AUL-B1/B2)

- Implementing strong error detection mechanisms (AI-TD)

- Ensuring outputs are:

  - Structured

  - Reviewable

  - Traceable

This allows organizations to:

- Scale safety workflows

- Reduce documentation burden

- Maintain compliance and auditability
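One simple form of error detection for AI-assisted tooling is to validate every generated draft against a fixed structure before it reaches a human reviewer. The sketch below is illustrative only: the required fields, the ID pattern, and the function name are assumptions, and the check is not a mechanism defined by ISO/IEC TS 22440.

```python
import json
import re

REQUIRED_FIELDS = {"id", "kind", "text", "traces_to"}  # hypothetical schema
ID_PATTERN = re.compile(r"^[A-Z]+-\d{3}$")             # hypothetical ID scheme


def check_tool_output(raw):
    """Parse and validate a tool-generated draft.

    Returns (record, errors). A non-empty `errors` list means the draft is
    rejected automatically and never presented as review-ready.
    """
    try:
        record = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return None, ["top-level value must be an object"]

    errors = []
    missing = REQUIRED_FIELDS - record.keys()
    if missing:
        errors.append(f"missing fields: {sorted(missing)}")
    if "id" in record and not (
        isinstance(record["id"], str) and ID_PATTERN.match(record["id"])
    ):
        errors.append("id does not match the expected pattern")
    if "traces_to" in record and not isinstance(record["traces_to"], list):
        errors.append("traces_to must be a list")
    return record, errors


draft = '{"id": "REQ-001", "kind": "requirement", "text": "t", "traces_to": ["SG-001"]}'
print(check_tool_output(draft)[1])                          # no errors
print(check_tool_output('{"id": "req1", "text": "t"}')[1])  # two errors
```

Structural checks like this do not judge whether the content is correct, which remains the reviewer's job. They only ensure that what the reviewer sees is complete, well-formed, and traceable.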

🚀 Key Takeaway

Across automotive and industrial AI systems, we are seeing a clear shift:

- From implicit assumptions → explicit, documented constraints

- From manual enumeration → systematic exploration of edge cases

- From fragmented artefacts → traceable, structured safety workflows

Standards like ISO/PAS 8800 and ISO/IEC TS 22440 are not just influencing compliance—they are actively reshaping engineering practice.